Eduardo Ugalde

General Director & Founding Partner. Business Strategy | Strategic Marketing & Advertising | Commercial Excellence & Operations | Business Transformation (Digital Evolution) | Commercial Project Management

Executive Summary

Apple’s June 2025 paper, “The Illusion of Thinking: Understanding the Strengths and Limitations of Reasoning Models via the Lens of Problem Complexity,” presents evidence that current Large Reasoning Models (LRMs) do not truly “reason” but instead rely largely on pattern-matching, with accuracy collapsing once problem complexity passes a certain threshold. This finding has profound implications for the credibility, trustworthiness, accuracy, safety, cost-effectiveness, and workforce dynamics of AI in the life sciences and healthcare industries. This report synthesizes these impacts and analyses the risks and likely outcomes of replacing humans with AI in these high-stakes domains.

1. Core Findings of the Paper Relevant to Healthcare and Life Sciences

1.1. Reasoning Limitations

- LRMs generate plausible reasoning traces but often reach correct answers through superficial or inconsistent logic, especially as problem complexity rises.

- The paper identifies three performance regimes: at low complexity, standard (non-thinking) models match or outperform LRMs; at moderate complexity, the LRMs’ extended reasoning gives them an advantage; at high complexity, both collapse, and additional compute does not prevent it.

1.2. Scaling and Effort Collapse

- As problems become more complex, LRMs initially increase their reasoning effort (the number of "thinking" tokens they spend). Beyond a threshold, however, both their reasoning effort and their accuracy collapse, even when ample token budget remains available.

- Providing explicit algorithms does not help models overcome these failures, indicating fundamental limitations in logical step execution and self-correction.

2. Impact on Key Industry Dimensions

2.1. Credibility and Trustworthiness

- Transparency and Explainability: The illusion of reasoning undermines trust, as clinicians and regulators require not just accurate outputs but also auditable, logical rationales for medical decisions.

- Risk: If AI models “appear” to reason but actually rely on pattern-matching, trust in their recommendations erodes, especially when errors surface in novel or complex cases.

Example: A diagnostic AI provides a confident answer with an elaborate reasoning trace, but if this trace is not grounded in genuine logic, clinicians may lose trust — particularly if the AI fails in rare disease scenarios.

2.2. Accuracy and Capabilities

- Limits Under Complexity: LRMs’ performance collapses as problem complexity increases, making them unreliable for multi-step clinical reasoning, rare disease diagnosis, or complex treatment planning.

- Generalization Risk: Models may perform well on routine cases but become unreliable for less-represented or complex scenarios, endangering patient safety and clinical efficacy.

Example: An AI may recommend standard treatments accurately for common conditions but fail for patients with rare comorbidities, leading to harmful recommendations.

2.3. Safety

- Overconfidence and Hidden Failures: The appearance of reasoning can lead to overconfidence in AI outputs, masking errors and increasing risk in clinical contexts.

- Bias and Generalizability: Models may underperform for underrepresented groups or novel clinical scenarios, threatening equitable and safe care.

Example: A clinical decision-support AI might confidently suggest an incorrect drug dosage for a paediatric patient, lacking true understanding of pharmacological principles.

2.4. Cost-Effectiveness

- Resource Demands: Increasing model size or compute does not guarantee improved reasoning or reliability in complex tasks.

- Hidden Costs: Deployment failures, the need for manual review, frequent retraining (of both model capabilities and staff competencies), and regulatory compliance can erode the anticipated ROI.

Example: A hospital invests heavily in an AI diagnostic tool but discovers it requires extensive manual oversight for complex cases, negating efficiency gains.

3. Risks of Replacing Humans with AI

3.1. Loss of Human Judgment and Intuition

- AI lacks the nuanced, intuitive judgment developed by clinicians, especially in unpredictable or complex cases where subtle cues or holistic understanding are essential.

Example: AI may overlook rare comorbidities or psychosocial factors that a human would catch, leading to misdiagnosis or inappropriate care.

3.2. Erosion of the Patient-Doctor Relationship

- Human interaction is central to trust, empathy, and patient satisfaction; replacing clinicians with AI risks eroding this relationship and negatively impacting adherence and outcomes.

Example: Patients may be less willing to share sensitive information with an AI, resulting in incomplete histories and suboptimal care.

3.3. Over-Reliance on Imperfect AI Reasoning

- Current models can appear logical but often rely on pattern-matching, leading to hidden errors and overconfidence in recommendations.

Example: A diagnostic AI might generate plausible explanations but fail catastrophically in novel cases, while clinicians over-trust its outputs.

3.4. Safety, Accountability, and Regulatory Challenges

- AI systems are not always transparent or explainable, complicating error tracing and accountability, and raising legal and ethical concerns.

Example: If an AI misdiagnoses a patient, it may be unclear who bears responsibility: the vendor, the provider, the institution, or the clinician.

3.5. Data Privacy and Security

- AI requires vast amounts of sensitive data, increasing the risk of privacy breaches and regulatory penalties.

Example: A cyberattack on a hospital AI system could expose large volumes of patient records, highlighting the need for robust security.

3.6. Workforce Displacement and Skill Decay

- Replacing humans with AI can lead to job loss and erosion of clinical skills, making it difficult for clinicians to intervene when AI fails.

Example: As AI performs more diagnostic work, radiologists may lose proficiency. This weakens their ability to intervene when the AI errs and erodes the institution’s knowledge base and accumulated experience.

4. Potential Outcomes for Business and Patients

Positive Outcomes (with Caution)

- Operational Efficiency: AI can streamline workflows, reduce length of stay, and optimize resources, yielding cost savings.

- Improved Access: AI tools can extend specialist expertise to underserved areas and support remote monitoring.

- Augmentation, Not Replacement: When used to assist (not replace) clinicians, AI can enhance decision-making, reduce errors, and improve outcomes.

Negative Outcomes (if Humans Are Replaced)

- Reduced Quality and Safety: Over-reliance on AI may increase errors in complex or atypical cases, with severe consequences for patient safety.

- Loss of Trust: Confidence in healthcare systems may erode if AI-driven errors become public or if the human touch is lost.

- Hidden Costs: Failures in AI deployment may necessitate costly manual review, retraining, or legal settlements, eroding anticipated savings.

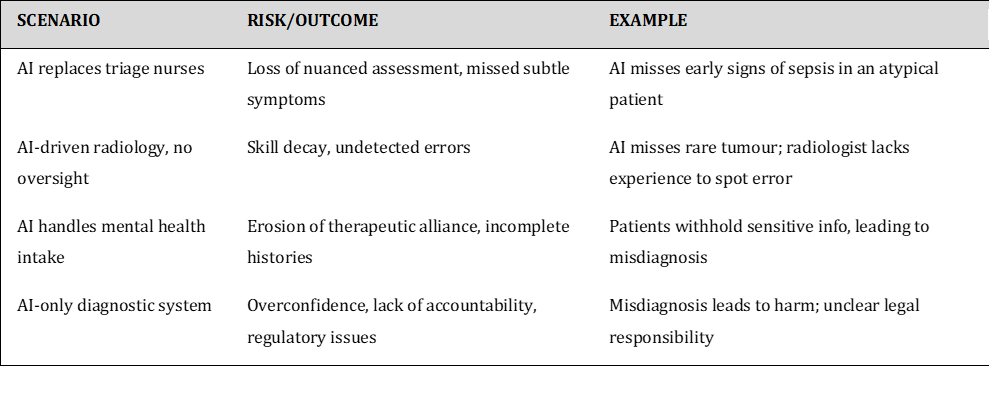

5. Illustrative Examples

6. Industry Recommendations

7. Conclusion

Apple’s “The Illusion of Thinking” paper underscores that current reasoning models are not yet ready for unsupervised, high-stakes deployment in life sciences and healthcare. Their limitations in genuine reasoning, scalability, and explainability threaten credibility, trust, patient safety, and cost-effectiveness. Given these limitations, replacing humans with AI introduces significant risks: loss of clinical judgment, weakened patient relationships, safety concerns, and hidden costs. The most effective and safest path forward is a collaborative, hybrid approach in which AI augments human expertise, supporting both business success and optimal patient outcomes.

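Reference: Apple (June 2025). “The Illusion of Thinking: Understanding the Strengths and Limitations of Reasoning Models via the Lens of Problem Complexity.”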