Eduardo Ugalde

General Director & Founder Partner. Business Strategy | Strategic Marketing & Advertising | Commercial Excellence & Operations | Business Transformation (Digital Evolution) | Commercial Project Management

Introduction

The 2025 NVIDIA Research paper, “Small Language Models are the Future of Agentic AI,” rethinks how AI agents should operate, with direct consequences for industries that have strict safety, compliance, and trust requirements. It argues that the future of most agentic AI (systems that automate structured business processes) lies not in monolithic, general-purpose large language models (LLMs) but in small language models (SLMs) tuned for well-bounded, repetitive, and specialized tasks. The reference to the paper appears at the end of this article.

My article synthesizes the implications of this research for both the life science (pharmaceutical/biotech) and healthcare (patient care) sectors. It clarifies:

- The essential and irreplaceable role of human expertise in building safe, trustworthy SLM agentic applications,

- The sharp distinction between business process automation in pharma/biotech versus direct patient-facing healthcare,

- The real business applications for SLMs in physician prescription or diagnostics, with a focus on what is possible, credible, and supported by evidence,

- And why SLMs are poised to transform industry workflows while human oversight remains non-negotiable in high-stakes domains.

SLMs vs LLMs: The Core Argument

NVIDIA’s research argues that SLMs, defined as models compact enough for real-time inference on consumer hardware, are now mature enough to match or exceed LLMs on the routine, narrowly scoped tasks central to many agentic systems. SLMs offer:

- Dramatically lower operational costs (10 to 30× cheaper to run than LLMs),

- Faster inference and lower latency (enabling real-time responses even on the edge or on-device),

- Easier fine-tuning and specialization (small models adapt rapidly to new business rules or regulations),

- Enhanced privacy (running locally on-premises or on a device, crucial for sensitive data),

- Simpler, safer integration for modular task automation.
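To make the cost claim in the list above concrete, here is a minimal arithmetic sketch. The 10 to 30× range comes from the paper as cited above; the function name `slm_cost_range` and the monthly LLM spend are hypothetical, chosen only for illustration.

```python
def slm_cost_range(llm_cost: float,
                   low_factor: float = 10.0,
                   high_factor: float = 30.0) -> tuple[float, float]:
    """Estimate the (lowest, highest) SLM cost for a workload that
    currently costs `llm_cost` on an LLM, assuming the SLM is between
    `low_factor` and `high_factor` times cheaper to run."""
    if llm_cost < 0:
        raise ValueError("llm_cost must be non-negative")
    return llm_cost / high_factor, llm_cost / low_factor


# Hypothetical monthly LLM spend for one document-processing workflow.
monthly_llm_cost = 30_000.0
low, high = slm_cost_range(monthly_llm_cost)
print(f"Estimated SLM cost: {low:,.0f} to {high:,.0f} per month")
```

The estimate covers inference cost only. It does not include the cost of the expert review that the rest of this article treats as mandatory.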

Crucially, while SLMs excel at handling well-defined subtasks, NVIDIA emphasizes that complex, ambiguous, or high-risk tasks still require human judgment and final review.

Human-in-the-Loop: Why Expert Oversight Remains Essential

In Pharma/Life Science (Business Processes)

- Safety & Regulatory Demands: All SLM-generated business outputs (e.g., pre-filled regulatory reports, adverse event triage, data extraction for compliance) must be reviewed and approved by specialists before formal submission or action.

- Data Curation: Both model development (removal of patient-identifiable information, bias checks) and retraining for new scenarios depend on domain experts to ensure compliance and reliability.

- Ongoing Supervision: Human review is necessary to handle flagged exceptions and edge cases that SLMs did not anticipate, and to continuously monitor for protocol drift and regulatory changes.

In Healthcare/Patient Care

- Escalation of Care: SLM-enabled triage bots or reminder agents can handle low-risk, scripted workflows, but any uncertainty, red flag, or patient distress must trigger hand-off to licensed clinical staff.

- Final Accountability: No responsible system, as per NVIDIA’s analysis, should ever allow SLMs to make unaudited prescription or diagnostic decisions.

- Continuous Quality & Trust: Human-in-the-loop design is vital for both patient trust and clinical safety, and becomes more so as workflows grow more digitized and scalable.
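The escalation rule described above can be sketched as a simple routing gate. This is an illustration under stated assumptions, not a clinical specification. The red-flag terms, the confidence threshold, the intent names, and the `TriageResult` structure are all hypothetical, and a real deployment would have them defined and validated by clinical staff.

```python
from dataclasses import dataclass

# Hypothetical values for illustration only; not clinically validated.
RED_FLAG_TERMS = ("chest pain", "can't breathe", "overdose")
CONFIDENCE_FLOOR = 0.90


@dataclass
class TriageResult:
    message: str       # what the patient wrote
    intent: str        # intent label assigned by the SLM
    confidence: float  # SLM confidence in that label, 0.0 to 1.0


def route(result: TriageResult, scripted_intents: set[str]) -> str:
    """Return 'handle_with_script' only for low-risk, scripted requests.
    Any red flag, unknown intent, or low confidence goes to a human."""
    text = result.message.lower()
    if any(term in text for term in RED_FLAG_TERMS):
        return "escalate_to_clinician"
    if result.intent not in scripted_intents:
        return "escalate_to_clinician"
    if result.confidence < CONFIDENCE_FLOOR:
        return "escalate_to_clinician"
    return "handle_with_script"


scripted = {"appointment_reminder", "refill_reminder"}
print(route(TriageResult("When is my next visit?", "appointment_reminder", 0.97), scripted))
print(route(TriageResult("I have chest pain after my dose", "refill_reminder", 0.99), scripted))
```

The gate fails closed: the scripted path is taken only when every check passes, so an unanticipated case defaults to human hand-off rather than to automation.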

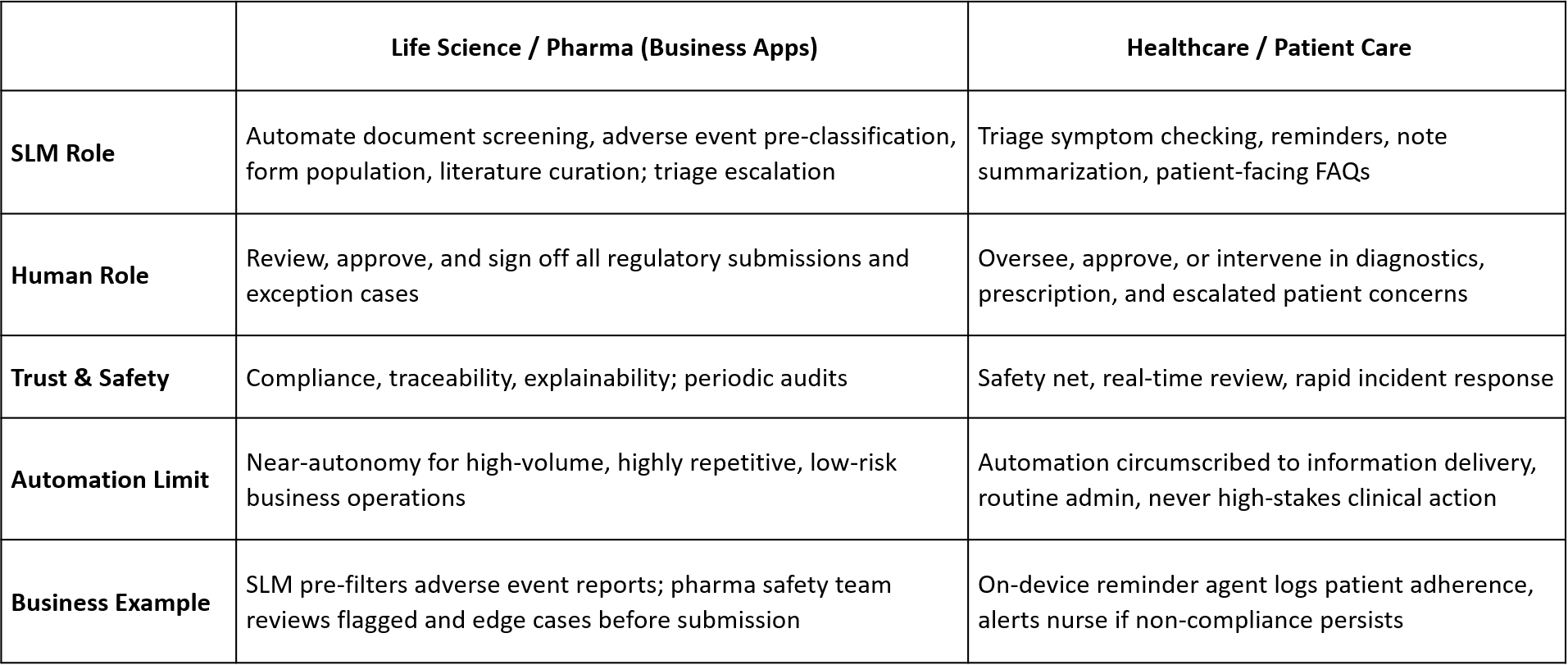

Sectoral Distinctions: Life Science vs. Healthcare

Physician Prescription and Diagnostics: What Is Possible, What Is Not (Supported by NVIDIA’s Research)

Appropriate SLM Business Applications:

- Prescription Drafting: The SLM pre-fills e-prescription orders, applies formulary rules, and flags standard warnings. The physician reviews, edits, and signs off.

- Drug Interaction Checks: SLM screens for drug-drug interactions and alerts prescribers within their workflow, but never blocks or overrides clinician judgment.

- Diagnostic Pre-Screening: SLMs classify test results by protocol (e.g., abnormal/normal, urgent/routine) and pre-populate templated diagnostic notes for review.

- Patient Education Summaries: SLMs auto-generate medication or procedure instructions for patients, subject to clinician validation.

- Clinical Documentation Assistance: SLMs draft clinical summaries, discharge notes, or supporting rationales to boost productivity, pending physician approval.

“Agentic applications are interfaces to a limited subset of LM capabilities… a SLM appropriately fine-tuned for the selected prompts would suffice while having the above-mentioned benefits of increased efficiency and greater flexibility… it is essential that the generated output conform to strict formatting requirements imposed by the code invoking the LM… In such cases, it becomes unnecessary for the model to handle multiple formats … a SLM trained with a single formatting decision enforced… is preferable over a general-purpose LLM in the context of AI agents.”
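The quoted point about strict formatting can be illustrated with a small validation step in the code that invokes the model. This is a minimal sketch that assumes the SLM is asked to return a prescription draft as a JSON object. The field names, the `parse_draft` function, and the `pending_physician_review` status are hypothetical and do not come from the paper.

```python
import json

# Hypothetical schema for a drafted order: field name -> accepted type(s).
REQUIRED_FIELDS = {"drug": str, "dose_mg": (int, float), "frequency": str}


def parse_draft(raw_output: str) -> dict:
    """Parse SLM output and reject anything that deviates from the
    single expected format. A valid draft is marked as pending review
    and is never treated as a signed order."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    if set(data) != set(REQUIRED_FIELDS):
        raise ValueError(f"unexpected or missing fields: {sorted(data)}")
    for name, expected in REQUIRED_FIELDS.items():
        value = data[name]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            raise ValueError(f"field {name!r} has the wrong type")
    data["status"] = "pending_physician_review"
    return data


draft = parse_draft('{"drug": "amoxicillin", "dose_mg": 500, "frequency": "every 8 hours"}')
print(draft["status"])
```

Because the calling code accepts exactly one format, malformed output is rejected before it reaches a clinician's queue, and well-formed output still arrives only as a draft for sign-off.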

NOT Supported / NOT Advised:

- SLMs as fully-autonomous prescription writers or autonomous diagnostic agents without mandatory human validation, due to safety and liability risks.

- Use for open-ended, complex, or ambiguous clinical reasoning, which LLMs (and more importantly, human experts) do better.

Why SLMs Are Especially Suited for These Roles

- Efficiency and Speed: SLMs process large volumes of repetitive cases faster and 10 to 30× more cheaply than LLMs, making them well suited to the “digital paperwork” that often burdens clinical and compliance teams.

- Fine-Tuning: Specialized SLMs can be continuously fine-tuned to evolving business, ethical, or medical standards with relatively little data and compute.

- Modular Integration: SLMs fit “Lego-like” into existing systems, allowing selective deployment and stepwise expansion without wholesale replacement of trusted workflows.

- Privacy and Locality: By running SLMs on local devices or secure on-premises servers, organizations minimize the risk of external data leakage, which is crucial for sensitive clinical and commercial data.

The Essential Power of Human-SLM Synergy

The research’s LLM-to-SLM conversion algorithm (see Section 6) reinforces that the ultimate goal is not automation for its own sake, but a future where machines handle the volume and humans assure the value. SLMs provide reliability, consistency, and speed for the known and the knowable, but it is humans who guard the gates when the stakes are real.

“The agentic AI industry… would act as a catalyst for this transformation… the weight of advantages described [for SLMs] can plausibly overturn the present state of affairs… With advanced inference scheduling systems… [SLM] barrier [to adoption] is being reduced to a mere effect of inertia.”

Conclusion

Small Language Models are the future foundation for business-critical agentic AI in pharma/life science and healthcare, enabling scalable, efficient automation of low-variance, high-volume tasks. Yet, as NVIDIA’s research makes clear, the value, safety, and trust of these systems depend on continuous, expert human oversight, especially in clinical and regulatory contexts.

- For life science business apps: SLMs drive cost and speed, but all regulatory or safety actions rest on human expertise.

- For healthcare delivery: SLMs assist, but licensed professionals decide.

The future of SLM agentic AI is not about replacing experts, but about releasing their expertise by ensuring they spend less time on what machines can do, and more on what only humans can responsibly judge.

Reference: “Small Language Models are the Future of Agentic AI,” NVIDIA Research, 2025.